When running a virtual infrastructure based on VMware vSphere, you have multiple techniques to create a highly available environment. You can create a cluster or use VMware HA or FT, but when the power fails you’re done. To buy a little bit of time, you can add a UPS with enough capacity to power your servers, switches and storage for a limited period: just enough to start a standby generator or to wait until the power returns.

But what if this takes too long and you must power down your virtual environment, including all virtual machines?

When you’re on-site and aware of the power failure you can of course shut everything down manually, but what if you’re not there, in the evening, during weekends, etc.? Do you return in the morning to find that all your virtual machines are down and corrupted?

You will have to automate the shutdown of the virtual machines and ESXi hosts and I found two ways to do this.

Option 1 (APC UPS):

7 out of 10 UPSes I come across are APC UPSes, and APC has some nice management software to automate server shutdown.

To automate the shutdown of your virtual machines and ESXi hosts you will need:

- APC PowerChute Network Shutdown (PCNS) 3.0.1;

- VMware Management Appliance (vMA) 5.1;

- TCP ports 3052, 6547, 80 and UDP port 3052 need to be open on the network.

First, install and configure the VMware Management Appliance on your VMware vSphere infrastructure. This is a basic VMware vSphere task, so I won’t bother you with details or screenshots but it comes down to this:

- Connect to your VMware vSphere environment using the vSphere client software or the vSphere web client;

- Select the host to which you want to deploy vMA in the inventory pane;

- Select Deploy from a file or URL if you have already downloaded and unzipped the vMA virtual appliance package;

- Click Browse, select the OVF, and click Next two times and accept the licence agreement;

- Specify a name for the virtual machine;

- Select an inventory location and a resource pool where the virtual machine will be placed;

- Select the datastore to store the virtual machine on and select the required disk format and network mapping;

- Review the summary information and select Finish;

- The VMware Management Appliance will be provisioned in several minutes.

When the vMA is deployed, boot it and configure it. If you need help, check the vSphere 5.1 Documentation Center.

Important! Exclude the vMA from VMware DRS to make sure it is always hosted on the same server (the last server in the server list, see below).

The next step is copying the PCNS files which you downloaded from the APC website to the vMA.

I used ‘/tmp/APC’. To unzip the files use:

cd /tmp/APC

sudo gunzip pcns301ESXi.tar.gz

sudo tar -xf pcns301ESXi.tar

There will now be a new directory named ESXi created in /tmp/APC, go to this directory and change the permissions of the ‘install_en.sh’ file so you can execute it and run the installer.

sudo chmod 777 install_en.sh

sudo ./install_en.sh

Follow the instructions: accept the license, select an installation path, add a target ESXi host, etc. When the installation is complete you can configure PCNS from your web browser.

In the PCNS Configuration wizard provide:

- Username and passwords to communicate with the APC Network Management Card (NMC) and to log in to PCNS;

- IP address to communicate with the NMC;

- The UPS configuration: Single, Redundant, or Parallel;

Single: In a Single-UPS configuration, each computer server or group of servers is protected by a single UPS. That is, each server has one PCNS agent communicating with a single NMC installed on a UPS.

Redundant: In a Redundant-UPS Configuration, PowerChute Network Shutdown recognizes a group of either two or three UPS’s as a single UPS. In this configuration, one PowerChute Network Shutdown Agent on a server communicates with two or three NMCs (depending on the number of UPS’s in the configuration). Typically, the servers have multiple (dual or triple) power cords. Each UPS has its own NMC, which has a unique IP address.

Parallel: In a Parallel-UPS Configuration, two or more UPS’s support the load. Each UPS does not need to be capable of supporting the load on its own, as the combined output of all UPS’s in the configuration shares the load. However, depending on the size of the load there may be reserve UPS(s) available. Redundancy is provided if there is at least one more UPS than is required to support the load.

- UPS details like NMC protocol, port and IP address;

When done correctly the PCNS will register with the NMC.

The next step is to configure how PCNS responds to UPS events. For instance, configure it to shut down the ESXi host when the UPS capacity drops below 50%. Beware: make sure that the remaining capacity gives you enough time to shut down all virtual machines and ESXi hosts.
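A rough way to sanity-check such a threshold is to compare the runtime left at that battery level with the time a full shutdown needs. The Python sketch below is only illustrative arithmetic and not part of PCNS: the linear discharge model, the function name and every number in it are my own assumptions, so replace them with values measured in your environment.

```python
def shutdown_budget_ok(full_runtime_min, threshold_pct, vm_count,
                       minutes_per_vm, host_shutdown_min,
                       safety_margin_min=2.0):
    """Return (ok, remaining_min, needed_min) for a battery threshold.

    Assumptions: runtime scales linearly with battery percentage, and the
    VMs shut down one after another (worst case). Both are simplifications.
    """
    remaining_min = full_runtime_min * threshold_pct / 100.0
    needed_min = (vm_count * minutes_per_vm
                  + host_shutdown_min + safety_margin_min)
    return remaining_min >= needed_min, remaining_min, needed_min


# Hypothetical numbers: 30 minutes of runtime at 100%, shutdown at 50%.
ok, remaining, needed = shutdown_budget_ok(
    30, 50, vm_count=10, minutes_per_vm=1.0, host_shutdown_min=3.0)
print(ok, remaining, needed)
```

If the check fails, either raise the threshold or reduce the load so the UPS lasts longer.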

When that’s done, configure what actions PCNS needs to perform when initiating a shutdown, notify users, run scripts, etc.

Now you’re done with the PCNS configuration. The next step is to add all VMware ESXi hosts in your cluster to the server list, to allow for the shutdown of multiple hosts by a single vMA. To do this, run the following command for each host:

sudo vifp addserver 10.218.45.20

Verify the server has been added

vifp listservers

Important! The address of the host running the vMA should always be last in the list.

Next, add the server to the fastpass list with the command:

vifptarget -s <server name or ipaddress>

To verify correct communications between the client and the host run the command:

vicfg-nics -l

Now we are almost done, the last step is to configure the virtual machines to shutdown with the host server and configure the ESXi shutdown order. In the vSphere client or web client select the ESXi host configuration.

Select the Virtual Machine Startup/Shutdown link. Click properties at the top right and use “Move Up” to move the VMs in the order you want leaving the vMA VM at the top. Note that the startup order is the only one displayed. Shutdown uses the startup list in reverse. If you want the VMs to start on their own move them all the way up to Automatic Startup still leaving the vMA VM at the top.

Next make sure that all virtual machines have VMware Tools installed for a graceful shutdown to occur. Check the virtual machine configuration to make sure the settings for shutdown are correct: right-click the VM -> Edit Settings -> Options tab -> VMware Tools. Under Power Controls, Stop should be set to “Shut Down Guest”.

This will gracefully shut down the VMs in the order you set before telling the ESXi host to power down. You should see the shutdown commands start in the log when connected to the ESXi host with the vSphere client. If a VM fails to shut down gracefully, check that VMware Tools is running on the VM under its Summary tab in vCenter.

Now you’re done and ready to enjoy your worry-free weekends.

Option 2 (other UPS):

I also found a more generic solution from Ralph Lueders. Ralph wrote a very nice script to shut down a VMware ESXi server simply by checking the state of a specific ESXi network interface. To make this work you will need to connect the selected interface to a switch which is not protected by a UPS. If the specific interface is down for a number of cycles, the VMware ESXi host and the running virtual machines are shut down.

First of all download the auto-shutdown.sh script (change the extension after download).

When running the script you can select the monitored interfaces (-i) and the number of down cycles (-t). Try auto-shutdown.sh -v for further information.

To start monitoring on ESXi 4 hosts, add the following to ‘/etc/rc.local’.

/bin/kill $(cat /var/run/crond.pid)

/bin/echo "* * * * * /vmfs/volumes/datastore/control/auto-shutdown.sh -t 15 -i vmnic2" >> /var/spool/cron/crontabs/root

/bin/busybox crond

To start monitoring on ESXi 5 hosts, add the following to ‘/etc/rc.local.d/local.sh‘ (before the exit 0)

/bin/kill $(cat /var/run/crond.pid)

/bin/echo "* * * * * /vmfs/volumes/datastore/control/auto-shutdown.sh -t 15 -i vmnic2" >> /var/spool/cron/crontabs/root

/bin/crond

This will check the state of vmnic2 every minute and if vmnic2 is down for 15 minutes, the ESXi host will shut down. You will have to apply this on every ESXi host connected to the UPS.
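To make the "down for a number of cycles" idea concrete, here is a minimal Python sketch of the counting logic. It is not Ralph's script: the function name is made up, and I am assuming the cycles must be consecutive, so that a single "up" reading resets the counter.

```python
def should_shut_down(link_states, threshold):
    """Return True once the link was down for `threshold` checks in a row.

    link_states: iterable of booleans, one per check (True = link up).
    Assumption: a single "up" reading resets the down counter.
    """
    down_cycles = 0
    for link_up in link_states:
        if link_up:
            down_cycles = 0
        else:
            down_cycles += 1
            if down_cycles >= threshold:
                return True
    return False


# One check per minute with -t 15: fifteen "down" readings in a row.
print(should_shut_down([False] * 15, threshold=15))
```

With one check per minute, a short blip on the switch will therefore not trigger a shutdown; only a sustained outage will.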

Don’t forget to execute ‘auto-backup.sh’ to save the changes.

We are almost done, like with the APC UPS, the last step is to configure the virtual machines to shutdown with the host server and configure the ESXi shutdown order. In the vSphere client or web client select the ESXi host configuration.

Select the Virtual Machine Startup/Shutdown link. Click properties at the top right and use “Move Up” to move the VMs in the order you want leaving the vMA VM at the top. Note that the startup order is the only one displayed. Shutdown uses the startup list in reverse. If you want the VMs to start on their own move them all the way up to Automatic Startup still leaving the vMA VM at the top.

Next make sure that all virtual machines have VMware Tools installed for a graceful shutdown to occur. Check the virtual machine configuration to make sure the settings for shutdown are correct: right-click the VM -> Edit Settings -> Options tab -> VMware Tools. Under Power Controls, Stop should be set to “Shut Down Guest”.

This will gracefully shut down the VMs in the order you set before telling the ESXi host to power down. You should see the shutdown commands start in the log when connected to the ESXi host with the vSphere client. If a VM fails to shut down gracefully, check that VMware Tools is running on the VM under its Summary tab in vCenter.

Now you’re done and ready to enjoy your worry-free weekends.

Related Posts:

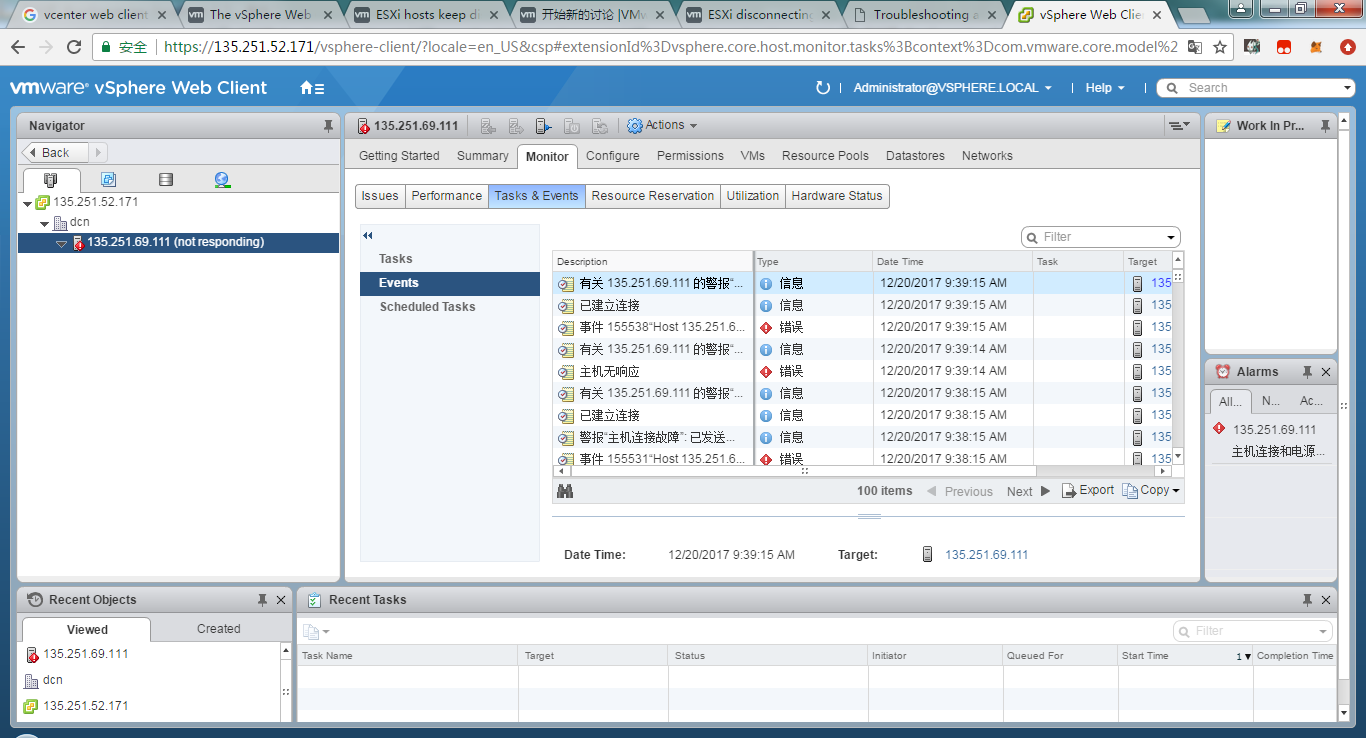

Last week I was facing a serious issue in my home lab: my ESXi host was getting disconnected from my vCenter Server at random. Whenever I made a configuration change, like enabling SSH or creating a new vSwitch, the host was disconnected immediately. I was quite frustrated and was looking for a solution, because it was very hard for me to work.

So I started troubleshooting by going through my vCenter log files and found following:

2015-03-16T23:06:11.270+05:30 [06304 info 'vpxdvpxdMoHost' opID=BADE9DBF-0000007B-b1] [HostMo] host connection state changed to [CONNECTED] for host-35

2015-03-16T23:06:11.273+05:30 [06304 info 'vpxdvpxdMoHost' opID=BADE9DBF-0000007B-b1] [HostMo::SetComputeCompatibilityDirty] Marked host-35 as dirty.

2015-03-16T23:02:09.628+05:30 [04380 info 'vpxdvpxdHostCnx' opID=SWI-7e4a49e9] [VpxdHostCnx] No heartbeats received from host 5294adb1-584a-2f13-8987-7b52ed31c84b within 120665000 microseconds

2015-03-16T23:02:09.628+05:30 [09928 info 'vpxdvpxdInvtHostCnx'] [VpxdInvtHost] Got lost connection callback for host-35

2015-03-16T23:02:09.629+05:30 [05548 info 'commonvpxLro'] [VpxLRO] -- BEGIN task-internal-46 -- host-35 -- VpxdInvtHostSyncHostLRO.Synchronize --

2015-03-16T23:02:09.629+05:30 [05548 warning 'vpxdvpxdInvtHostCnx'] [VpxdInvtHostSyncHostLRO] Connection not alive for host host-35

2015-03-16T23:02:09.629+05:30 [05548 info 'vpxdvpxdInvtHostCnx'] [VpxdInvtHost::FixNotRespondingHost] Attempting to fix not responding host host-35

2015-03-16T23:02:10.052+05:30 [05548 info 'vpxdvpxdHostAccess'] Got VpxaCnxInfo over SOAP version vpxapi.version.version9 for host megatron.alex.local

2015-03-16T23:06:32.368+05:30 [07760 warning 'Default'] Failed to connect socket; <io_obj p:0x000000000a6fa038, h:3300, <TCP '0.0.0.0:0'>, <TCP '[::1]:32010'>>, e: system:10061(No connection could be made because the target machine actively refused it)

2015-03-16T23:06:33.369+05:30 [07760 warning 'Proxy Req 00047'] Connection to localhost:32010 failed with error class Vmacore::SystemException(No connection could be made because the target machine actively refused it).

So I guess something wrong was happening related to heartbeat exchange between my host and vCenter server. I started my troubleshooting by following below steps:
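If you have a long vpxd log, it can help to pull out only the missed-heartbeat messages and convert the timeout to seconds. The Python sketch below does that with a regular expression built from the message wording in my log; the function name is my own, and the pattern may need adjusting for other vCenter versions.

```python
import re

# Pattern based on the "No heartbeats received" line quoted in this post.
HEARTBEAT_RE = re.compile(
    r"No heartbeats received from host\s*(?P<host>[0-9a-f-]+)"
    r"\s*within\s*(?P<us>\d+)\s*microseconds"
)


def heartbeat_timeouts(log_text):
    """Return a list of (host_id, seconds) for missed-heartbeat messages."""
    return [
        (m.group("host"), int(m.group("us")) / 1_000_000)
        for m in HEARTBEAT_RE.finditer(log_text)
    ]


sample = ("[VpxdHostCnx] No heartbeats received from host "
          "5294adb1-584a-2f13-8987-7b52ed31c84b within 120665000 microseconds")
for host, seconds in heartbeat_timeouts(sample):
    print(host, seconds)
```

In my case the value works out to roughly two minutes without a heartbeat before vCenter marked the host as not responding.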

1: I checked whether the ESXi host was able to reach my vCenter Server by pinging it and by testing the connection from the ESXi host to vCenter Server on port 902.

Note: The telnet command won’t work on ESXi, so you have to use the “nc -z” command instead.

So as you can see, I was able to reach my vCenter from my ESXi host successfully.

2: Next I checked whether or not my ESXi host was listening on port 902 (the heartbeat port).

The above command verified that yes, my host was listening on port 902.

3: I added the host disconnection timeout string in the Advanced Settings of vCenter and increased the value to 120.

I verified once again that the value had been added.

4: Next I checked my vCenter Server for the “Managed IP Setting”. If the vCenter IP is not listed there, you can also face this issue.

In my case I manually entered the IP under Run Time Settings, as shown in the above image.

5: I checked the same settings on my ESXi host.

So from the above image it is pretty clear that my ESXi host was configured to be managed by the correct vCenter Server.

6: Next I checked the heartbeat port value on my ESXi host by running the command:

# grep -i serverport /etc/vmware/vpxa/vpxa.cfg

The output I got was strange: my ESXi host was using port 922 for the heartbeat exchange instead of the default port 902.
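If you want to script this check across several hosts, the same lookup can be done on a copy of the file. The Python sketch below assumes the port is stored in a `<serverPort>` XML element, which is what the grep above matches on my host; the sample fragment, the IP address in it and the function name are made up for illustration.

```python
import re


def heartbeat_port(vpxa_cfg_text):
    """Return the <serverPort> value from vpxa.cfg text as int, or None."""
    match = re.search(r"<serverPort>\s*(\d+)\s*</serverPort>",
                      vpxa_cfg_text, re.IGNORECASE)
    return int(match.group(1)) if match else None


# Made-up fragment; a real vpxa.cfg contains many more elements.
sample = ("<vpxa><serverIp>192.0.2.10</serverIp>"
          "<serverPort>922</serverPort></vpxa>")
port = heartbeat_port(sample)
if port != 902:
    print(f"heartbeat port is {port}, expected 902")
```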

According to VMware KB Article 2040630:

This issue is caused by dropped, blocked, or lost heartbeat packets between the vCenter Server and the ESXi/ESX host. If there is an incorrect configuration of the vCenter Server managed IP address, the host receives the heartbeat from vCenter Server but cannot return it.

It is important to remember that the default heartbeat port is UDP 902, and these packets must be sent between vCenter Server and the ESXi/ESX host for the host to stay connected and remain in the vCenter Server inventory.

I changed the port to 902 by editing the vpxa.cfg file, then removed my ESXi host from vCenter Server and added it back, hoping that my issue was now resolved. But surprisingly I was still getting the disconnection problem. Once again I connected to my ESXi host using SSH, checked the vpxa.cfg file and found that the port had been changed back to 922. This was strange.

On digging further I found that this was happening because the heartbeat port was specified as 922 in a registry key on the vCenter Server. I got this clue from issue 2437489 posted in the VMware Community group.

The full registry key is :

HKEY_LOCAL_MACHINE\SOFTWARE\VMware, Inc.\VMware VirtualCenter

As you can see in the above image, the heartbeat port was 922, which was causing all the trouble. I changed it to 902, restarted my vCenter Server, and bingo: my issue was resolved.

Migrating and upgrading physical systems is a nightmare. With VMware vSphere, however, it is not that complicated: proper planning will lead to a successful migration from vSphere 5.x to 6.x without any downtime for the VMs. Some cases might need downtime for VMs, as described in this post. I would recommend going through the complete post, as the same process and approach apply to all vSphere migrations irrespective of the versions.

Support for vSphere 5.0 and 5.1 already ended on 24 August 2016, and vSphere 5.5 support is going to end on 19 September 2018. But there are many environments still running 5.0 and 5.1. It is high time to upgrade to the latest vSphere 6.x. This post details every step to consider for a successful migration.

Know the Existing Environment

For a successful migration we need to know the existing environment completely, so gathering the details of the existing vSphere environment is very important. RVTools will do this in just a couple of minutes. After getting the information from RVTools, we need to analyze it and gather the information below.

- Existing vCenter Version and Build no

- Existing ESXi Hosts Version and Build no

- Existing server hardware make and model, along with NIC and HBA card info (this is important if the same hardware is used for the upgrade)

- Standard switch or distributed switch in use, with its port groups, uplinks and VLAN details

- Cluster information with HA and DRS rules

- EVC mode information and the maximum EVC mode supported by the servers

- Name, OS, IP, port group and datastore details of all VMs

- Hardware version of all VMs and VMware Tools installation status

- VM RDM LUN details, with the SCSI ID mapping for each LUN, RDM type (physical/virtual) and pointer location

- USB drive mapping for VM’s if any.

- Integrations with vCenter server like backup, SRM and other.

Verify compatibility and upgrade matrix

Verifying the VMWare products compatibility and hardware compatibility is very important.

VMWare products compatibility can be verified here.

Hardware compatibility can be verified here.

Hardware & Storage compatibility

It is very important to check the compatibility of the servers, storage, NIC cards and HBA cards before planning the upgrade or implementation of ESXi. NIC and HBA compatibility is covered in the drivers section below.

Verifying Hardware compatibility & BIOS firmware

Open the VMware Compatibility Guide, select the Partner Name (vendor), provide the server model in the keyword field, and click Update and View Results.

Search for the exact server model and CPU as shown below, and check whether the desired ESXi version is compatible. As shown below, one model is supported only up to 6.5 U1, while the other supports 6.7 as well.

Click on the ESXi version to find supported hardware firmware details.

Recommended BIOS and hardware firmware details will be shown as below. Install them before installing ESXi.

Verifying Storage and SAN devices compatibility and Drivers

Open the VMware Compatibility Guide and select Storage/SAN as shown below.

Select the vendor, provide the storage model in the keyword field and click Update.

Search for the exact storage model, select the storage and click on the ESXi version.

Note: if an ESXi version is not showing in the list, it is either not supported or not yet validated by VMware.

The list of all supported drivers and firmware will be shown for the storage.

vCenter to ESXi compatibility

vCenter Server support for the existing ESXi hosts is very important when it comes to migration, as in most cases the migration will happen while the VMs are up and running on the existing ESXi hosts.

- ESXi 5.0 or 5.1 (with updates) cannot be directly upgraded to 6.5; an intermediate upgrade to 5.5 or 6.0 is required.

- ESXi 5.5 or later can be directly upgraded to 6.5 or 6.5 U1.

- ESXi 5.x cannot be directly upgraded to 6.7; an intermediate upgrade to 6.0 is required.

- ESXi 6.0 or later can be directly upgraded to 6.7.

Supported vCenter Upgrade Path

- vCenter 5.0 or 5.1 (with updates) cannot be directly upgraded to 6.5; an intermediate upgrade to 5.5 or 6.0 is required.

- vCenter 5.5 or later can be directly upgraded to 6.5 or 6.5 U1.

- vCenter 5.x cannot be directly upgraded to 6.7; an intermediate upgrade to 6.0 is required.

- vCenter 6.0 or later can be directly upgraded to 6.7.

Supported ESXi Upgrade Path

- ESXi 5.0 or 5.1 (with updates) cannot be directly upgraded to 6.5; an intermediate upgrade to 5.5 or 6.0 is required.

- ESXi 5.5 or later can be directly upgraded to 6.5 or 6.5 U1.

- ESXi 5.x cannot be directly upgraded to 6.7; an intermediate upgrade to 6.0 is required.

- ESXi 6.0 or later can be directly upgraded to 6.7.
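The upgrade-path rules above can be written down as a small lookup, which is handy when planning many hosts. This Python sketch encodes only the four rules listed in this post (it is not an official VMware interoperability check), the function names are my own, and where two intermediate versions are allowed it simply picks one of them.

```python
def _ver(text):
    """Turn a version string like '5.1' into a comparable tuple (5, 1)."""
    return tuple(int(part) for part in text.split("."))


def upgrade_steps(current, target):
    """Return the versions to install, in order, to reach `target`.

    Only targets 6.5 and 6.7 are covered, following the rules in this post.
    """
    cur, tgt = _ver(current), _ver(target)
    if cur >= tgt:
        raise ValueError("target must be newer than the current version")
    if tgt == (6, 5):
        # 5.5 or later goes direct; 5.0/5.1 need 5.5 (or 6.0) in between.
        return ["6.5"] if cur >= (5, 5) else ["5.5", "6.5"]
    if tgt == (6, 7):
        # 6.0 or later goes direct; any 5.x needs 6.0 in between.
        return ["6.7"] if cur >= (6, 0) else ["6.0", "6.7"]
    raise ValueError("only 6.5 and 6.7 targets are covered here")


for start in ("5.1", "5.5", "6.0"):
    print(start, "->", upgrade_steps(start, "6.7"))
```

Always confirm the result against the VMware upgrade matrix for your exact builds before starting.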

Decide the vSphere 6.x version to be upgraded to

Based on the available hardware, whether new or reused, and its compatibility as verified above, the new vSphere 6.x version and build number need to be decided. For example, if the hardware is compatible with ESXi 6.5 U1, then the vCenter and ESXi upgrades need to be planned for vSphere 6.5 U1; the detailed steps are listed below.

Note that not just the server hardware but also NIC and HBA card compatibility is very important, whether you are reusing existing hardware or using new hardware.

vSphere 6.x License and Support

VMware vSphere licenses for ESXi and vCenter are version-based: if you are an existing customer with ESXi 5.x and vCenter 5.x licenses, they cover only 5.x (5.0, 5.1, 5.5). However, if you already have an SA, ESXi and vCenter 5 licenses can be upgraded to 6.x; contact your local VMware partner for support.

Supported Drivers & Firmware for Hardware

Once the vSphere version for the available hardware is decided, all the necessary drivers for NIC cards, FCoE, FC (HBA) and multipathing need to be decided.

Here I will explain how to find the exact driver and download it for the targeted ESXi version.

Support and Driver for Network NIC Cards

Step 1: Run below command to get all NIC cards available on the Host.

esxcli network nic list

Example:

[root@localhost:~] esxcli network nic list

Name PCI Device Driver Admin Status Link Status Speed Duplex MAC Address MTU Description

------ ------------ ------ ------------ ----------- ----- ------ ----------------- ---- -----------

vmnic0 0000:01:00.0 bnx2 Up Down 0 Half a4:ba:db:0e:cc:9c 1500 QLogic Corporation QLogic NetXtreme II BCM5716 1000Base-T

vmnic1 0000:01:00.1 bnx2 Up Up 1000 Full a4:ba:db:0e:cc:9d 1500 QLogic Corporation QLogic NetXtreme II BCM5716 1000Base-T

Step 2: Get the Vendor ID (VID), Device ID (DID), Sub-Vendor ID (SVID), and Sub-Device ID (SDID) using the vmkchdev command:

vmkchdev -l |grep vmnic#

Example:

[root@localhost:~] vmkchdev -l |grep vmnic0

0000:01:00.0 8086:10fb 103c:17d3 vmkernel vmnic0

[root@localhost:~]

Vendor ID (VID) = 8086

Device ID (DID) = 10fb

Sub-Vendor ID (SVID)= 103c

Sub-Device ID (SDID) = 17d3
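If you collect this output from many hosts, splitting the line into the four IDs by hand gets tedious. The following Python sketch parses one `vmkchdev -l` line in the five-column layout shown in the example above; the function name and dictionary keys are my own, and the layout is an assumption based only on that example.

```python
def parse_vmkchdev_line(line):
    """Split one `vmkchdev -l` line into PCI address, IDs and device name.

    Expected layout (from the example above):
    <pci address> <VID:DID> <SVID:SDID> <owner> <device name>
    """
    pci_addr, vid_did, svid_sdid, owner, name = line.split()
    vid, did = vid_did.split(":")
    svid, sdid = svid_sdid.split(":")
    return {"pci": pci_addr, "vid": vid, "did": did,
            "svid": svid, "sdid": sdid, "owner": owner, "name": name}


ids = parse_vmkchdev_line("0000:01:00.0 8086:10fb 103c:17d3 vmkernel vmnic0")
print(ids["vid"], ids["did"], ids["svid"], ids["sdid"])
```

The four values are exactly what the IO Devices search in the compatibility guide asks for.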

Step 3: Get the driver and firmware in use for NIC card

esxcli network nic get -n vmnic#

Example:

[root@localhost:~] esxcli network nic get -n vmnic0

Advertised Auto Negotiation: true

Advertised Link Modes: 10BaseT/Half, 10BaseT/Full, 100BaseT/Half, 100BaseT/Full, 1000BaseT/Full

Auto Negotiation: true

Cable Type: Twisted Pair

Current Message Level: 0

Driver Info:

Bus Info: 0000:01:00.0

Driver: bnx2

Firmware Version: 5.0.13 bc 5.0.11 NCSI 2.0.5

Version: 2.2.4f.v60.10

Link Detected: false

Link Status: Down

Name: vmnic0

PHYAddress: 1

Pause Autonegotiate: true

Pause RX: false

Pause TX: false

Supported Ports: TP

Supports Auto Negotiation: true

Supports Pause: true

Supports Wakeon: true

Transceiver: internal

Virtual Address: 00:50:56:56:6f:75

Wakeon: MagicPacket(tm)

Step 4: Find supported driver and download driver

Select IO Devices in the VMware Compatibility Guide, then provide the VID, DID, SVID and SDID obtained in step 2 and click Update and View Results. All the supported ESXi versions for the NIC card will be shown as below.

Vendor ID (VID) = 8086

Device ID (DID) = 10fb

Sub-Vendor ID (SVID)= 103c

Sub-Device ID (SDID) = 17d3

Click on the required ESXi version (say 6.5 U1) beside the NIC driver, as shown below.

Expand the driver version and the link to download the driver will be shown as below.

Support and Driver for Storage HBA Cards

Step 1: Get Host Bus Adapter Driver currently in use

# esxcfg-scsidevs -a

Output will show something like vmhba0 mptspi or vmhba1 lpfc

Step 2: Get the HBA driver version currently in use

# vmkload_mod -s HBADriver |grep Version

For example, run this command to check the mptspi driver:

# vmkload_mod -s mptspi |grep Version

Step 3: Get HBA Vendor ID (VID), Device ID (DID), Sub-Vendor ID (SVID), and Sub-Device ID (SDID) using the vmkchdev command:

vmkchdev -l |grep vmhba#

Example:

[root@localhost:~] vmkchdev -l |grep vmhba0

0000:01:00.0 1077:2031 0000:0000 vmkernel vmhba0

[root@localhost:~]

Vendor ID (VID) = 1077

Device ID (DID) = 2031

Sub-Vendor ID (SVID)= 0000

Sub-Device ID (SDID) = 0000

Step 4: Find supported driver and download driver

Select IO Devices in the VMware Compatibility Guide, then provide the VID, DID, SVID and SDID obtained in step 3 and click Update and View Results. All the supported ESXi versions for the HBA card will be shown as below.

Vendor ID (VID) = 1077

Device ID (DID) = 2031

Sub-Vendor ID (SVID)= 0000

Sub-Device ID (SDID) = 0000

Click on the required ESXi version (say 6.5 U1) beside the HBA driver, as shown below.

Verify the VID and the other IDs, select the ESXi version and expand the driver; the download link will be given as shown below.

How to install/Update the Driver on ESXi

Upload the driver to the ESXi host. Use the commands below to install the driver if it is not present, or to update it if an old version is present. In some cases you might need to remove the old driver first, if the host already has a higher driver version than the supported one. All the commands are given below.

Remove the existing VIB:

Find the VIB name with the command below:

esxcli software vib list

Remove the VIB using the name found above:

esxcli software vib remove --vibname=nameofvib

Update the VIB driver using the command below:

esxcli software vib update -d "/vmfs/volumes/Datastore/DirectoryName/PatchName_VIBname.zip"

Install the VIB driver using the command below:

esxcli software vib install -d "/vmfs/volumes/Datastore/DirectoryName/PatchName_VIBname.zip"
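The choice between install, update and remove can be summed up in a few lines. This Python sketch only mirrors the decision described above and does not call esxcli; the function names and return values are hypothetical, and it assumes purely numeric dotted versions, which real driver versions (for example 2.2.4f.v60.10) often are not, so treat the comparison as illustrative.

```python
def version_key(version):
    """Turn '2.2.4' into (2, 2, 4); assumes numeric dotted versions only."""
    return tuple(int(part) for part in version.split("."))


def vib_action(installed, supported):
    """Pick the action for a driver VIB.

    installed: version currently on the host, or None if not present.
    supported: version listed as supported for the target ESXi release.
    """
    if installed is None:
        return "install"
    if version_key(installed) < version_key(supported):
        return "update"
    if version_key(installed) > version_key(supported):
        # Host has a newer driver than the supported one: remove it first.
        return "remove-then-install"
    return "none"


print(vib_action(None, "2.2.4"))
print(vib_action("2.2.3", "2.2.4"))
print(vib_action("2.3.0", "2.2.4"))
```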

Migration Approach & Steps

vCenter Upgrade / Install

The vCenter appliance has come a long way and is very stable now, so there is no need to rely on Windows-based vCenter any more; the vCenter appliance can be used without any doubt. However, vCenter topologies differ based on the environment, its size and its integration with other VMware products. All the supported vCenter topologies can be found here; the three most commonly used topologies are highlighted below.

vCenter Topologies

Standard Topology 1: For small deployments with 5-10 hosts and no integrations with other VMware products like NSX or vRA, Embedded is the best topology.

1 Single Sign-On domain

1 Single Sign-On site

1 vCenter Server with Platform Services Controller on same machine

Limitations

Does not support Enhanced Linked Mode

Does not support Platform Service Controller replication

Standard Topology 2: For medium to large deployments with integrations with other VMware products, or with multiple vCenter Servers for different purposes (like one vCenter for production hosts and another for VDI hosts), the topology below is the best.

1 Single Sign-On domain

1 Single Sign-On site

2 or more external Platform Services Controllers

1 or more vCenter Servers connected to Platform Services Controllers using 1 third-party load balancer

Standard Topology 3: For medium to large deployments with DR, integrations with other VMware products and multiple vCenter Servers, the topology below is the best and recommended one.

1 vSphere Single Sign-On domain

2 vSphere Single Sign-On sites (Prod , DR site)

2 or more external Platform Services Controllers per Single Sign-On Site ( 2 in Prod, 2 in DR)

1 or more vCenter Server with external Platform Services Controllers

1 third-party load balancer per site

vCenter Upgrade / Install

The first thing to be migrated or upgraded is the vCenter Server; only after that are the ESXi hosts and VMs migrated. Once the topology is decided, the first thing to think about is the upgrade path from the existing vCenter Server to the new one.

Upgrading from Windows-based vCenter to the appliance is supported. For a small or medium environment, however, I suggest building a fresh vCenter appliance based on the topology best suited to your infrastructure, with the same configuration for clusters and standard or distributed switches. For large environments with lots of distributed switches and port groups, consider an upgrade.

Upgrading vCenter server from 5.0 or 5.1 to 6.5 or 6.5 U1

Direct upgrade is not supported, hence an intermediate upgrade to 5.5 or 6.0 (any update) is required. During the upgrade, keep in mind that all existing ESXi hosts need to be supported by the vCenter Server.

Upgrading vCenter server from 5.5 or later to 6.5 or 6.5 U1

Direct upgrade is supported, hence the upgrade option can be used while installing the new vCenter Server. It requires a temporary IP while copying the data from the old vCenter to the new vCenter.

Upgrading vCenter server from 5.x to 6.7

Direct upgrade is not supported, hence an intermediate upgrade to 6.0 (any update) is required. During the upgrade, keep in mind that all existing ESXi hosts need to be supported by the vCenter Server.

Upgrading vCenter server from 6.0 or later to 6.7

Direct upgrade is supported, hence the upgrade option can be used while installing the new vCenter Server. It requires a temporary IP while copying the data from the old vCenter to the new vCenter.

Don’t forget to check the compatibility of your backup solution and notify the backup team, as most backup vendors integrate with vCenter Server.

ESXi Upgrade / Install

Based on the upgrade path and hardware compatibility ESXi upgrade or fresh installation can be done. Its always good to do upgrade for existing servers as no need to do all configurations like vmotion, dns , Ip’s and all. For new Hardware of course fresh installation is the only approach.

- ESXi 5.0 or 5.1 (with updates) cannot be directly upgraded to 6.5; an intermediate upgrade to 5.5 or 6.0 is required.

- ESXi 5.5 or later can be directly upgraded to 6.5 or 6.5 U1.

- ESXi 5.x cannot be directly upgraded to 6.7; an intermediate upgrade to 6.0 is required.

- ESXi 6.0 or later can be directly upgraded to 6.7.
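The upgrade rules above are the same for vCenter Server and ESXi, so they can be written down as a small lookup. This is only a sketch of the rules as stated in this post, not a replacement for the official interoperability matrices; the function name `upgrade_path` is my own.

```python
import re
from typing import List, Tuple


def _major_minor(version: str) -> Tuple[int, int]:
    """Extract (major, minor) from strings such as '5.1' or '6.5 U1'."""
    match = re.match(r"\s*(\d+)\.(\d+)", version)
    if match is None:
        raise ValueError(f"unrecognised version: {version!r}")
    return int(match.group(1)), int(match.group(2))


def upgrade_path(current: str, target: str) -> List[str]:
    """Return the versions to step through, ending with the target.

    Encodes only the four rules listed in this post (targets 6.5 and 6.7).
    """
    cur, tgt = _major_minor(current), _major_minor(target)
    if cur < (5, 0) or cur >= tgt:
        raise ValueError("only upgrades from 5.x/6.x to a newer release are covered")
    if tgt == (6, 5):
        # 5.5 or later upgrades directly; 5.0 and 5.1 need a hop first.
        return [target] if cur >= (5, 5) else ["5.5 or 6.0", target]
    if tgt == (6, 7):
        # 6.0 or later upgrades directly; any 5.x needs a hop to 6.0.
        return [target] if cur >= (6, 0) else ["6.0", target]
    raise ValueError("this sketch only knows targets 6.5 and 6.7")
```

For example, `upgrade_path("5.1", "6.5")` returns the intermediate hop followed by the target, while `upgrade_path("6.0", "6.7")` returns just the target.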

New servers: always use the latest OEM-provided ESXi build CD, as it has all the drivers the server needs. Minor updates and patches can be installed manually after installing ESXi from the OEM custom CD.

Old servers being upgraded: the OEM custom CD is the usual recommendation for an ESXi update, but in my experience it is better to update with the stock ESXi image from VMware rather than the OEM one, and to install the necessary supported drivers, updates and patches manually afterwards. The OEM CD always installs the latest available drivers, and much old hardware may not support them according to the VMware compatibility matrices.

So the standard rules are:

- Verify the compatibility of the NIC, HBA and other components with the vSphere version.

- Install the necessary supported BIOS and firmware versions for the hardware.

- Install or update with the OEM CD or the stock ESXi image from VMware.

- Check that the correct driver versions are present ( esxcli software vib list | grep -i driver_name )

- Install or update the necessary compatible hardware drivers ( esxcli software vib install/update -d path_of_driver )

- Install the necessary updates and security patches. I recommend using the CLI rather than Update Manager; the CLI takes a few seconds to install.
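If you want to run the driver check across many hosts, the `grep -i` step can be reproduced on captured output. The sketch below assumes you have already saved the text of `esxcli software vib list` somehow, and that the first two columns are the VIB name and version. The sample text is made up for illustration and `matching_vibs` is a hypothetical helper name.

```python
from typing import Dict


def matching_vibs(vib_list_output: str, driver_name: str) -> Dict[str, str]:
    """Case-insensitive match on each line, like `grep -i driver_name`.

    Returns {vib_name: version}, assuming name and version are the
    first two whitespace-separated columns of a matching line.
    """
    needle = driver_name.lower()
    found = {}
    for line in vib_list_output.splitlines():
        if needle in line.lower():
            fields = line.split()
            if len(fields) >= 2:
                found[fields[0]] = fields[1]
    return found


# Invented sample, only to show the shape of the input.
SAMPLE_OUTPUT = """\
Name         Version  Vendor  Acceptance Level  Install Date
-----------  -------  ------  ----------------  ------------
example-nic  1.0.0-1  ACME    VMwareCertified   2018-01-01
example-hba  2.3.4-5  ACME    VMwareCertified   2018-01-01
"""

print(matching_vibs(SAMPLE_OUTPUT, "EXAMPLE-NIC"))
```

The returned version can then be compared by hand against the driver version listed in the compatibility guide for your hardware.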

Distributed switch migration

The distributed switch is a major thing to consider in a migration, as most migrations happen without downtime.

Fresh Installation of vCenter without Upgrade.

In this case we first need to configure the distributed switch in the new vCenter Server. There is an export and import option for distributed switches that makes this easy: export the switch from the old vCenter and import it, and all the port groups and configuration appear as-is in the new vCenter. Otherwise a manual configuration is required.

When migrating the ESXi hosts from the old vCenter Server to the new one, follow the steps below.

- Select a jump ESXi host residing in the old vCenter Server (this will be used for all VM migrations).

- Remove one physical uplink from the distributed switch on each ESXi host, or on the jump ESXi host (in the old vCenter).

- Create a standard switch and port groups with the same VLAN info as the distributed switch, and add the removed physical uplink to it.

- Migrate the VMs to the jump ESXi host using vMotion (old vCenter).

- Migrate the VMs from the distributed switch to the standard switch on the jump ESXi host (old vCenter).

- Remove the jump host from the distributed switch in the old vCenter.

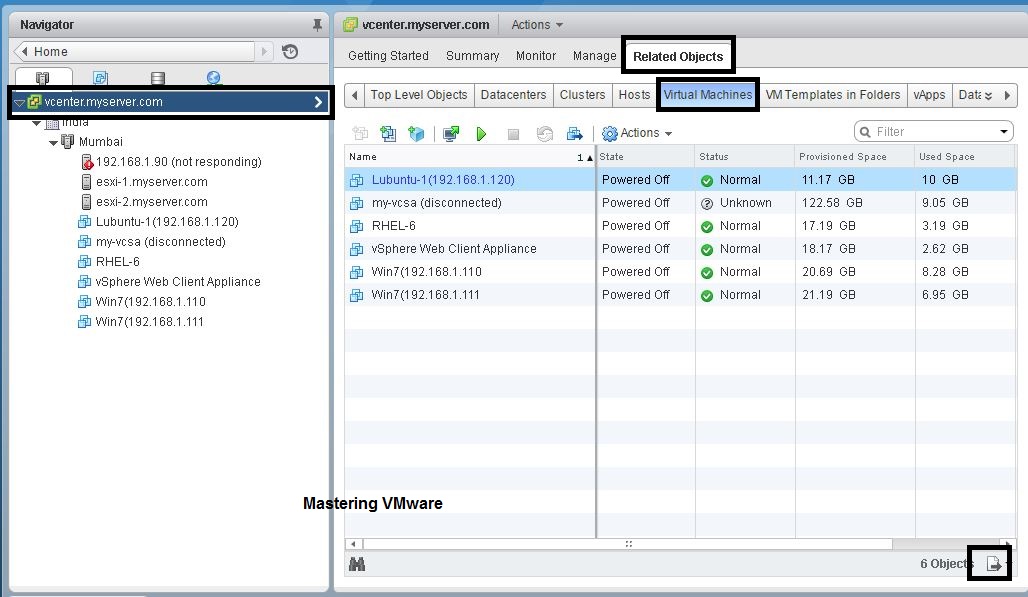

- Add the jump ESXi host (say ESXi 5.5), with VMs running on it, to the new vCenter Server (say 6.5 U1).

- The jump host now appears in the new vCenter and shows as disconnected in the old vCenter Server (say 5.5).

- Add the jump host to the distributed switch in the new vCenter.

- Migrate all VMs on the jump host from the standard switch to the distributed switch.

- Move all VMs from the jump host (say ESXi 5.5) to the new ESXi 6.5 hosts (already added to the distributed switch) in the new vCenter Server.

- Disconnect the jump host from the new vCenter and add it back to the old vCenter. Repeat until all VMs are moved.
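Because the jump host goes back and forth several times, it helps to print the steps above as a runbook per batch of VMs. The sketch below only generates text in the order given in this post; it does not talk to vCenter. The names `jump_host_runbook` and `jump-esxi` are placeholders of mine.

```python
from typing import List, Sequence


def jump_host_runbook(vm_batches: Sequence[Sequence[str]],
                      jump_host: str = "jump-esxi") -> List[str]:
    """Return the ordered steps for moving VM batches through one jump host."""
    # One-time preparation on the jump host, done in the old vCenter.
    steps = [
        f"Remove one physical uplink of {jump_host} from the distributed switch (old vCenter)",
        f"Create a standard switch on {jump_host} with matching VLAN port groups on that uplink",
    ]
    for index, batch in enumerate(vm_batches):
        vms = ", ".join(batch)
        steps += [
            f"vMotion {vms} to {jump_host} (old vCenter)",
            f"Move {vms} from distributed to standard port groups on {jump_host}",
            f"Remove {jump_host} from the distributed switch (old vCenter)",
            f"Add {jump_host} to the new vCenter",
            f"Add {jump_host} to the distributed switch (new vCenter)",
            f"Move {vms} from standard to distributed port groups",
            f"vMotion {vms} to the new ESXi hosts (new vCenter)",
        ]
        # The jump host only needs to go back while batches remain.
        if index < len(vm_batches) - 1:
            steps.append(
                f"Disconnect {jump_host} from the new vCenter and add it back to the old vCenter"
            )
    return steps


for number, step in enumerate(jump_host_runbook([["vm1", "vm2"], ["vm3"]]), 1):
    print(f"{number}. {step}")
```

How many VMs go into a batch depends on the capacity of the jump host, which this sketch leaves to you.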

vCenter server Upgrade from existing VC 5.x.

In this case there is not much to worry about, as all the existing vCenter Server 5.x information and configuration, including the ESXi hosts, comes across to the new vCenter Server 6.x. The only thing to make sure of is that both the old and the new ESXi hosts are added to the distributed switch after the vCenter upgrade.

VM Migration

The important part of the migration is making sure the VMs stay up and running. People always say no downtime, and thanks to VMware, migrations are easy. In most cases one or both of the following will take place.

Migrating VM’s from Old vCenter to NEW vCenter ( no VC upgrade)

This is the case when a new vCenter Server is built (not upgraded) and the old vCenter is still hosting the ESXi hosts and VMs. As long as the new vCenter supports the ESXi version, we can add the ESXi host, with VMs running on it, to the new vCenter Server. The host is automatically disconnected from the old vCenter and added to the new one without interrupting the VMs running on it.

If a distributed switch is in use, first move the VMs from the distributed switch to a standard switch. This part is covered in the distributed switch section.

Note: the ESXi host being moved to the new vCenter may have VMs with RDMs that are clustered with another VM. In that case, move both hosts that carry the two clustered RDM VMs one after the other, and keep them on the same vCenter Server. Don’t leave one clustered RDM VM on the old vCenter and the other on the new vCenter, as this will cause storage flapping issues.

Migrating VM’s between ESXi hosts of Different Versions under same vCenter server.

As long as both the old and the new ESXi hosts are under the same vCenter Server, both can see the VM resources such as storage, RDM LUNs and network port groups. It is then just a matter of a vMotion from the old ESXi host to the new one, provided vMotion is configured. This way migration is possible without even a ping drop.

If a distributed switch is in use, both the old and the new ESXi hosts need to be added to it. If EVC mode is configured on the cluster hosting the old ESXi host, make sure the same EVC mode is configured for the new ESXi host if you want to vMotion between them.

Things to verify:

- The old ESXi host (say ESXi 5.5) and the new ESXi host (say 6.5 U1) can both see all datastores and RDM LUNs where the VMs are hosted.

- The same standard switch port groups are available on the old and the new ESXi host.

- The old and the new ESXi host are both joined to the distributed switches the VMs are using.

- The same EVC mode is configured at cluster level for the old and the new ESXi hosts, for live vMotion.
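The checklist above boils down to comparing what the old host has with what the new host has. Here is a minimal sketch of that comparison; the `HostView` structure and its field names are my own invention, and you would fill them in by hand or from whatever inventory export you use.

```python
from typing import List, NamedTuple, Optional, Set


class HostView(NamedTuple):
    """What one ESXi host can see; filled in by hand or from an inventory export."""
    name: str
    storage: Set[str]        # datastores and RDM LUNs
    port_groups: Set[str]    # standard switch port groups
    dvswitches: Set[str]     # distributed switches the host has joined
    evc_mode: Optional[str]  # EVC mode of the host's cluster, None if off


def vmotion_blockers(old: HostView, new: HostView) -> List[str]:
    """List what the new host lacks compared with the old host."""
    problems = []
    checks = [
        ("storage", old.storage - new.storage),
        ("port groups", old.port_groups - new.port_groups),
        ("distributed switches", old.dvswitches - new.dvswitches),
    ]
    for label, missing in checks:
        if missing:
            problems.append(f"{new.name} is missing {label}: {', '.join(sorted(missing))}")
    if old.evc_mode != new.evc_mode:
        problems.append(f"EVC mode differs: {old.evc_mode} vs {new.evc_mode}")
    return problems
```

An empty list means none of the four items in the checklist is flagged; it does not prove that vMotion will succeed.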

Migrating VM’s with RDM (Physical / Virtual)

VMs with RDMs can also be migrated without downtime, provided the destination host can see the RDM LUNs and the LUNs where the pointers are saved.

However, please note that if a SCSI controller is shared by multiple RDM LUNs, vMotion is not possible. For example, if RDM LUN 1 is on SCSI 1:0 and RDM LUN 2 is on SCSI 1:1, vMotion is not possible. This is why it is always recommended to map each RDM LUN to a different SCSI controller, such as RDM LUN 1 to SCSI 1:0 and RDM LUN 2 to SCSI 2:0.
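The rule above is easy to check before a migration if you note down the SCSI address of each RDM. The sketch below takes addresses in the `controller:unit` form used above and reports any controller that carries more than one RDM. The function name and the input dictionary are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List


def shared_controllers(rdm_addresses: Dict[str, str]) -> Dict[int, List[str]]:
    """Map controller number -> RDM names, for controllers holding several RDMs.

    Addresses look like 'SCSI 1:0' or '1:0' (controller:unit).
    """
    by_controller = defaultdict(list)
    for rdm, address in rdm_addresses.items():
        controller = int(address.replace("SCSI", "").strip().split(":")[0])
        by_controller[controller].append(rdm)
    return {c: names for c, names in by_controller.items() if len(names) > 1}


# The two layouts described above.
print(shared_controllers({"RDM LUN 1": "SCSI 1:0", "RDM LUN 2": "SCSI 1:1"}))
print(shared_controllers({"RDM LUN 1": "SCSI 1:0", "RDM LUN 2": "SCSI 2:0"}))
```

The first layout reports controller 1 as shared; the second reports nothing.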

Hope this post is useful, leave your suggestions and comments below.

I noticed a couple of people reported this problem in the last two months so I figured a blog post would be useful. This thread on VMTN triggered this article. If your ESXi host is disconnected from vCenter (even 5.0 and 5.1 appear to be impacted by this) and you see error messages in your log files about free space like these:

WARNING: VisorFSObj: xxxx: Cannot create file /var/spool/snmp/xxxxxxxx_x_x_xxxx.trp for process hostd-worker because the inode table of its ramdisk (root) is full.

VmkCtl Locking (/etc/vmware/esx.conf) : Unable to create or open a LOCK file. Failed with reason: No space left on device

This could be caused by the fact that ESXi is running out of inodes. You can simply check that on the command line by using the following command:

stat -f /

The outcome of this command will look as follows:

File: “/”

ID: 1 Namelen: 127 Type: visorfs

Block size: 4096

Blocks: Total: 449852 Free: 324368 Available: 324368

Inodes: Total: 8192 Free: 55

As you can see, the number of “free” inodes is low, and this is what causes the issues. In some cases it is reported (by vdsyn in this case) that “/var/spool/snmp/” is full and needs to be cleaned out. This KB Article explicitly calls out “/var/run/sfcb/” and also explains what you can delete and how. So make sure to look at those two directories when an ESXi host is disconnected from vCenter.
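If you collect the `stat -f /` output from several hosts, the inode line can be picked out with a small parser. This is a sketch that assumes the output has the same “Inodes: Total: … Free: …” shape as shown above. The function name and the 5% warning threshold are my own choices, not VMware guidance.

```python
import re
from typing import Tuple


def inode_usage(stat_output: str) -> Tuple[int, int, float]:
    """Return (total, free, percent_free) from `stat -f` output."""
    match = re.search(r"Inodes:\s*Total:\s*(\d+)\s+Free:\s*(\d+)", stat_output)
    if match is None:
        raise ValueError("no 'Inodes:' line found in the output")
    total, free = int(match.group(1)), int(match.group(2))
    return total, free, 100.0 * free / total


def inodes_low(stat_output: str, threshold_percent: float = 5.0) -> bool:
    """True when fewer than threshold_percent of the inodes are free."""
    return inode_usage(stat_output)[2] < threshold_percent


# The inode line from the output shown above.
total, free, percent = inode_usage("Inodes: Total: 8192 Free: 55")
print(f"{free} of {total} inodes free ({percent:.2f}%)")
```

With the numbers from the example above, well under 1% of the inodes are free, so `inodes_low` flags the host.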

In this latest post by TechHead guest contributor James Pearce he covers a topic near and dear to many of us – how to get VMware ESX/ESXi and its VMs to shut down gracefully upon power failure to the host. Tighter integration between a UPS and a VMware ESX/ESXi host is no doubt something that will become more mature over time though for now it can be an issue for many administrators especially those running the free version of ESXi. So read on to find out how James overcame this issue in his virtualization lab.

A Nearly Free UPS

I recently acquired an APC Smart UPS that was being chucked out from work (having never worked) for my home lab, along with an ancient AP9606 management card. With the batteries changed the UPS burst into life, but after some messing about getting the right firmware on it, I was disappointed to find no easy way to get it to shut down my ESXi box when it needed to.

VMware License Restriction

The VMware management appliance (vima) can only shut down paid-for installations of ESXi (using apcupsd and VMware community member lamw’s scripts). The necessary interfaces on the free version have been read-only since ESXi v3.5 U3.

Burp Suite

I’ve been finding a lot of use recently for network sniffers, so thought I’d have a look at how the VMware vSphere Client works, as obviously that can shut down the host. As luck would have it, the client is nothing more than a glorified web browser with the slight complication that it’s talking over SSL – but that’s no problem for PortSwigger’s Burp suite in its transparent proxy mode.

The traffic captures revealed that only three frames would be needed to perform the shutdown (hello, authenticate, and shutdown). A little manipulation is needed to get the session keys in, but that is basically it. ESXi’s startup and shutdown policy will do the work suspending or shutting down individual VMs, as configured through the vSphere Client.

The Script – shutdown.bat

Using this newly found knowledge I’ve created a Windows batch file (with a few supporting text files which are basically HTTP requests) that takes the hostname, username and password as parameters and will then shut down the host cleanly. The script needs something to launch it – APC PowerChute Network Shutdown in my case – and a utility to send the commands over SSL, for which I’ve used Nmap ncat (which just needs to be installed).

I have put all the necessary script files into a single convenient zipped file which you can download from here – the scripts are fairly well commented so you should be able to follow what is happening.

APC PowerChute

A potential issue is that APC’s PowerChute Network Shutdown utility will always shut down the Windows machine it’s running on. I’ve therefore used a separate Windows management VM to host PowerChute and my script, since I wanted everything else just suspended.

PowerChute has an option to ‘run this command’ but it’s limited to 8.3 paths and won’t accept command line parameters. A separate batch file is needed (poweroff.bat) that runs the shutdown script with the parameters – but that could shut down other ESXi boxes as well if required. Also the PowerChute service needs to be run as local Administrator as the default Local System account doesn’t have sufficient rights.
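The idea of the wrapper is simply to hold the parameters that PowerChute cannot pass. To show what such a wrapper has to do, here is the same idea sketched in Python rather than as a batch file. The script path follows the extraction folder used in this post. The argument order (hostname, username, password), the host list and the function names are assumptions of mine, so check them against the downloaded scripts.

```python
import subprocess
from typing import List, Sequence, Tuple

# Assumed location of the downloaded script; adjust to where you extracted it.
SHUTDOWN_SCRIPT = r"c:\scripts\esxi\shutdown.bat"


def build_commands(hosts: Sequence[Tuple[str, str, str]]) -> List[List[str]]:
    """One command line per (hostname, username, password) entry."""
    return [["cmd", "/c", SHUTDOWN_SCRIPT, host, user, password]
            for host, user, password in hosts]


def run_all(hosts: Sequence[Tuple[str, str, str]], dry_run: bool = True) -> None:
    """Run the shutdown script for each host; by default only print the hosts."""
    for command in build_commands(hosts):
        if dry_run:
            # Print the host only, so the password stays out of the console.
            print("would shut down", command[3])
        else:
            subprocess.run(command, check=False)


# Placeholder credentials for illustration only.
run_all([("esxi01", "root", "secret"), ("esxi02", "root", "secret")])
```

Listing several hosts is how one wrapper can shut down more than one ESXi box from the same PowerChute event.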

Testing the Scripts

Download the ZIP and extract the files. I’ve assumed the package will be extracted to c:\scripts\esxi; update the path in poweroff.bat otherwise. The hostname, username and password also need to be specified in poweroff.bat.

Next install and configure PowerChute (in particular change the service user account) and enter the script in the ‘run this command’ box – I also increased the time allowed, but in practice it runs in a few seconds.

Some waiting around can be avoided when testing by setting the UPS low-battery duration as high as it will go – just remember to change it back.

Next open up vSphere Client from a real machine and pull the UPS plug. Once the battery gets down to the specified number of minutes remaining, the script should run and the tasks will appear in vSphere Client. Shortly afterwards the VM used to launch the script will itself shut down under the control of PowerChute!

In Summary

The complete set of files can be downloaded here, and the Nmap ncat installation for Windows from here. A UPS management application is also needed; for APC Smart UPSs use PowerChute for Windows.

The shutdown script includes logging and should report most errors. Bear in mind though that once a host is shut down, it probably won’t be restarted when utility power is restored.

Burp Suite is a handy utility to bypass device limitations by enabling the scripting of management tasks that are only usually available through a web interface. I’ve used it to build scripts to regularly reboot home-spec routers every couple of weeks to keep them stable, and to set the time on the APC AP9606 management card daily since it doesn’t support NTP – and here to build a UPS shutdown script for ESXi; functionality that should really be built into ESXi in the first place.
